There’s a specific kind of pain that every AI builder hits eventually.

You spent weeks building an agent. The demo was clean. The client loved it. You handed it over — and within days, things started going sideways. The agent forgot earlier decisions. Contradicted itself. Repeated mistakes you’d already fixed. Eventually stopped being useful entirely.

You went back to the model. Swapped it. Tweaked prompts. Added more instructions. Nothing held.

This isn’t a model problem. This is a context problem. And it’s the single most common reason AI agents fail outside of a demo environment.

Market research consistently finds that most AI projects never reach successful production. A 2024 RAND Corporation report notes that, by some estimates, more than 80% of AI projects fail, double the failure rate of IT projects that don’t involve AI. For AI agents specifically, the rate is plausibly even higher because the operational demands are more complex: autonomous, multi-step decisions on real data.

Communities like r/ArtificialIntelligence and r/SideProject are full of threads on the same theme: agents that work in demos and break in the first week of real use.

Context engineering is the discipline that addresses this gap.

Why Your Agent Worked in the Demo and Breaks in Production

When an AI agent fails, the instinctive response is to swap the model. GPT-4 to Claude. Claude to Gemini. Gemini to whatever launched last week.

The failure persists. Because the model was never the problem.

Every LLM operates on a context window — a block of tokens containing system instructions, conversation history, retrieved documents, tool definitions, and user data. That block is everything the model has to work with. The model cannot reach outside it. It responds based solely on what’s in that window.

When the window is poorly designed — overloaded with irrelevant instructions, missing critical information, accumulating contradictory history, bloated with tool schemas that consume thousands of tokens before a single useful task runs — the model degrades.

Not because it’s a bad model. Because it’s working with a bad information environment.

Anthropic’s engineering team documented this directly: “Most production AI failures are context failures, not model failures.”

What Context Failures Look Like in Practice

- The agent ignores instructions defined early in the conversation because they’ve scrolled out of the effective attention range

- A multi-domain agent handling billing questions pulls in customer support context that doesn’t apply, generating confused responses

- A long-running agent that’s been active for hours starts contradicting decisions made in the first session — because the relevant history was truncated

- An agent with 90 connected tools burns 50,000 tokens in overhead before a user types a single word

These aren’t edge cases. They’re the default failure mode of agents built without attention to context architecture.

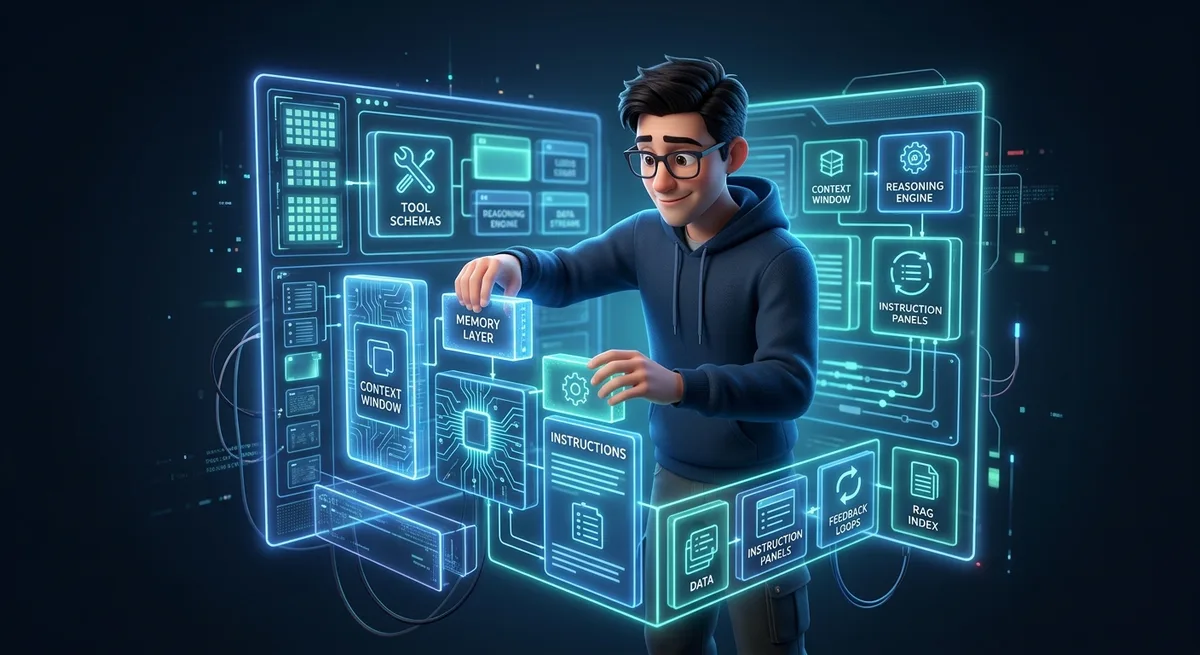

What Is Context Engineering

Context engineering is the practice of designing the complete information environment that an AI agent operates within — determining what it knows, when it knows it, and in what form it receives that knowledge throughout its operational lifecycle.

It’s not prompt engineering. Prompt engineering optimizes a single question. Context engineering defines the infrastructure layer that determines how your agent behaves across thousands of interactions over weeks of real use.

The components:

- system instructions

- retrieved documents

- conversation history

- tool definitions

- structured memory

- user-specific data

A well-crafted prompt improves one response. Well-implemented context engineering makes your entire agent behave consistently across the full span of real usage — without you watching over it.

For a solo builder, this is the difference between an agent you have to babysit and an agent that earns its place in your stack.

5 Patterns That Separate Working Products from Impressive Demos

The practice of context engineering in production systems has consolidated around five patterns that appear consistently in the most robust implementations. Here they are, translated into solo builder terms.

1. Progressive Disclosure — Load Context On Demand

The most expensive mistake: dumping every capability into the system prompt upfront.

If your agent can do 20 different things but a given session only involves 2, you’re spending tokens on 18 irrelevant instruction sets that the model processes regardless — increasing latency, burning budget, and reducing response quality.

Progressive disclosure solves this in three layers:

- Discovery — the agent knows the name and description of each capability

- Activation — full instructions load only when a capability is triggered

- Execution — task-specific scripts load during active execution
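
The three layers above can be sketched in a few lines of JavaScript. This is a minimal illustration, not a reference implementation: the registry shape, the `loadInstructions` callbacks, and the function names are all assumptions made for the example, and the execution layer (task-specific scripts) is left out.

```javascript
// Hypothetical capability registry: names and instruction text are illustrative only.
const capabilities = {
  customers: {
    description: 'Manage customer records',
    // In a real setup this would read a separate file or call a retrieval step.
    loadInstructions: () => 'Full instructions for customer record management.',
  },
  reports: {
    description: 'Generate financial summaries',
    loadInstructions: () => 'Full instructions for financial reporting.',
  },
  support: {
    description: 'Handle support tickets',
    loadInstructions: () => 'Full instructions for ticket handling.',
  },
};

// Discovery: only names and one-line descriptions go into the base context.
function buildDiscoveryPrompt(registry) {
  const lines = Object.entries(registry).map(
    ([name, cap]) => `- /${name}: ${cap.description}`
  );
  return ['## Available capabilities', ...lines].join('\n');
}

// Activation: full instructions are appended only for capabilities in use.
function buildContext(registry, activeNames) {
  const sections = activeNames
    .filter((name) => Object.prototype.hasOwnProperty.call(registry, name))
    .map((name) => `## /${name}\n${registry[name].loadInstructions()}`);
  return [buildDiscoveryPrompt(registry), ...sections].join('\n\n');
}

const idleContext = buildContext(capabilities, []);
const reportsContext = buildContext(capabilities, ['reports']);
console.log(`idle: ${idleContext.length} chars, reports active: ${reportsContext.length} chars`);
```

The idle context carries only the capability index, and the full text for a capability appears only once that capability is named as active.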

Anthropic’s agent skills format — markdown files with YAML frontmatter — was adopted by OpenAI, Google, GitHub, and Cursor within weeks of its December 2025 release. If you use Claude Code, you’re already working within this model.

Implementation: Structure your CLAUDE.md as modular sections. Keep the main file lean. Put capability-specific instructions in separate files, loaded via MCP or RAG references only when the relevant task is active.

    <!-- CLAUDE.md with progressive disclosure structure -->
    # Core Identity
    You are a business assistant for [project name].

    ## Available capabilities
    - /customers: manage customer records
    - /reports: generate financial summaries
    - /support: handle support tickets

    <!-- Full instructions for each capability live in separate files -->
    <!-- Loaded via MCP or RAG only when activated -->

Direct gain: 40–60% token reduction in focused sessions.

2. Context Compression — Manage Long History Without Losing Thread

Agents that run for hours or days accumulate conversation history. That history fills the context window. After enough time, the beginning of the conversation drops out — and the agent “forgets” decisions made earlier.

The naive fix: truncate. The result: the agent loses continuity and starts contradicting earlier commitments.

Context compression uses two techniques together:

- Sliding window: keeps the last N messages in full (preserves the model’s conversational rhythm)

- LLM summarization: compresses older messages into structured summaries that preserve decisions, constraints, and error records

The non-negotiable rule: always preserve error traces. If the agent tried something and it failed, that record must survive compression. Without it, the agent repeats the same mistakes indefinitely.
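
One way to honor that rule is to pull error records out of the older messages before summarizing, then re-attach them verbatim. The sketch below assumes each message is a `{ role, content }` object and that failures are marked with an `isError` flag. That flag is hypothetical, so substitute however your agent actually marks failed attempts.

```javascript
// Assumption: messages look like { role, content, isError? }; isError is a hypothetical flag.
function splitForCompression(messages, windowSize) {
  const cut = Math.max(messages.length - windowSize, 0);
  const older = messages.slice(0, cut);
  return {
    recent: messages.slice(cut), // sliding window, kept in full
    errorTraces: older.filter((m) => m.isError === true), // never summarized away
    toSummarize: older.filter((m) => m.isError !== true), // safe to compress
  };
}

// Rebuild the context: summary first, then verbatim error traces, then the recent window.
function rebuildContext({ recent, errorTraces }, summary) {
  const sections = [];
  if (summary) sections.push(`## Prior Context Summary\n${summary}`);
  if (errorTraces.length > 0) {
    const list = errorTraces.map((m) => `- ${m.content}`).join('\n');
    sections.push(`## Errors to Avoid\n${list}`);
  }
  const header =
    sections.length > 0 ? [{ role: 'system', content: sections.join('\n\n') }] : [];
  return [...header, ...recent];
}

const history = [
  { role: 'user', content: 'Export the report as CSV' },
  { role: 'assistant', content: 'CSV export failed: column "total" missing', isError: true },
  { role: 'user', content: 'Use PDF instead' },
  { role: 'assistant', content: 'PDF generated' },
];

const split = splitForCompression(history, 2);
// The summary string stands in for the LLM summarization step.
const context = rebuildContext(split, 'User wanted a report export; PDF was chosen.');
console.log(context[0].content);
```

Only `toSummarize` would be sent to the summarizer, so the failed CSV attempt survives compression word for word.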

Implementation with n8n:

Workflow structure: two Code nodes with an HTTP Request between them. Code node 1 splits messages into recent and older. The HTTP Request node sends the older messages to an LLM (e.g., GPT-4o-mini, which is cheap enough for summarization). Code node 2 assembles the final array with the injected summary.

    // Code node 1/2: split context window (mode: Run Once for All Items)
    // Returns ONE item so the HTTP Request node fires once, not once per message.
    const messages = $input.all().map(item => item.json);
    const windowSize = 10;
    const cut = Math.max(messages.length - windowSize, 0);

    return [{
      json: {
        recent: messages.slice(cut),    // kept verbatim
        older: messages.slice(0, cut),  // sent to the LLM for summarization
        olderCount: cut
      }
    }];

Code node 2 then reads the summary and rebuilds the message array:

    // Code node 2/2: inject summary into context
    // Assumes a linear flow (Code node 1 → HTTP Request → this node).
    // 'Split Context' is a placeholder: use the actual name of Code node 1.
    const { recent } = $('Split Context').first().json;

    // $input is the HTTP Request output (LLM summary in choices[0].message.content)
    const llmResponse = $input.first().json;
    const priorContext = llmResponse?.choices?.[0]?.message?.content ?? '';

    const recentMessages = recent.map(m => ({
      json: {
        role: m.role || 'user',
        content: m.content || JSON.stringify(m)
      }
    }));

    // Skip the summary header when there was nothing to summarize
    if (!priorContext) {
      return recentMessages;
    }

    return [
      { json: { role: 'system', content: `## Prior Context Summary\n\n${priorContext}\n\n---\n\n## Recent Session` } },
      ...recentMessages
    ];

If olderCount is 0, route around the HTTP Request with an IF node; Code node 2 handles the missing summary on its own.

HTTP Request node configuration:

- Method: POST

- URL: https://api.openai.com/v1/chat/completions (or your provider endpoint)

- Body (JSON; the user message is built with an n8n expression so the history is escaped correctly):

    {
      "model": "gpt-4o-mini",
      "messages": [
        {
          "role": "system",
          "content": "You are a conversation summarizer. You will receive a message history. Return a structured summary that preserves: decisions made, errors mentioned, problem context, and stated preferences. Format: ## Decisions\n## Errors to Avoid\n## Context\n## Preferences"
        },
        {
          "role": "user",
          "content": {{ JSON.stringify("History:\n" + $json.older.map(m => JSON.stringify(m)).join("\n")) }}
        }
      ]
    }

- Headers: Authorization: Bearer YOUR_API_KEY, Content-Type: application/json

3. Context Routing — Direct Queries to the Right Context Set

Multi-domain agents — handling customer support, billing, onboarding, and product questions simultaneously — suffer from context contamination. A billing question pulls in support instructions that don’t apply, generating muddled responses.

Context routing classifies the incoming query before anything else and loads only the relevant context set for that domain.

Two practical models:

- Rule-based routing: simple keyword or pattern matching (fast, cheap, reliable for predictable cases)

- LLM-powered routing: a smaller model classifies intent and routes to the right specialized agent (more precise for ambiguous inputs)

The hybrid approach works best: rules handle 70–80% of cases, and the LLM handles the rest.

Solo builder application: if you’re building an agent for a micro-SaaS, separate the contexts for technical support, billing, and onboarding. The agent answers better when it operates in the right context — not in a soup of every instruction at once.

Concrete example in n8n: routing workflow for a micro-SaaS assistant with 3 domains.

    // Code node: intent classifier
    // Input: $input.first().json.message (user's text)
    // Output: json with { routing: 'support' | 'billing' | 'onboarding' }
    const message = $input.first().json.message || '';

    // Patterns are case-insensitive; the leading \b avoids matches inside
    // longer words (e.g. "plan" in "explanation").

    // Billing patterns
    const billingPatterns = /\b(?:refund|charge|invoice|payment|plan|price|upgrade|downgrade|cancel subscription)/i;
    if (billingPatterns.test(message)) {
      return [{ json: { routing: 'billing', confidence: 'high' } }];
    }

    // Onboarding patterns
    const onboardingPatterns = /\b(?:getting started|first step|install|setup|new account|create|how to begin)/i;
    if (onboardingPatterns.test(message)) {
      return [{ json: { routing: 'onboarding', confidence: 'high' } }];
    }

    // Support patterns
    const supportPatterns = /\b(?:bug|error|not working|issue|crash|failure|screen|blocked)/i;
    if (supportPatterns.test(message)) {
      return [{ json: { routing: 'support', confidence: 'high' } }];
    }

    // Fallback: no rule matched, so flag the message for the LLM classifier
    return [{ json: { routing: 'support', confidence: 'low', needs_llm_classify: true } }];

n8n’s Switch node uses the routing field to direct the flow to the correct sub-agent. If confidence is ’low’, it first passes through a cheap LLM classifier (GPT-4o-mini) before routing.
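
Outside n8n, the same rules-first, LLM-second flow might look like the sketch below. It is a simplified illustration: the rule list is shortened, and `classifyWithLlm` is a hypothetical async function you would implement against your own provider. A stub stands in for it here so the example runs without network access.

```javascript
// Shortened rule set for illustration; order matters, first match wins.
const RULES = [
  { domain: 'billing', pattern: /\b(?:refund|invoice|payment|charge)/i },
  { domain: 'onboarding', pattern: /\b(?:getting started|setup|install)/i },
  { domain: 'support', pattern: /\b(?:bug|error|not working|crash)/i },
];
const DOMAINS = RULES.map((rule) => rule.domain);

// Cheap, synchronous first pass. Returns a domain name or null.
function matchRule(message) {
  const hit = RULES.find((rule) => rule.pattern.test(message));
  return hit ? hit.domain : null;
}

// Hybrid router: the classifier is only called when no rule matches.
async function routeMessage(message, classifyWithLlm) {
  const ruleDomain = matchRule(message);
  if (ruleDomain) return { routing: ruleDomain, source: 'rules' };

  const guess = await classifyWithLlm(message, DOMAINS);
  // Guard against labels outside the known set by defaulting to support.
  const routing = DOMAINS.includes(guess) ? guess : 'support';
  return { routing, source: 'llm' };
}

// Stub classifier (assumption): a real one would call a small model.
const stubClassifier = async () => 'billing';

routeMessage('I was charged twice', stubClassifier).then((r) => console.log(r));
routeMessage('Can I switch to yearly?', stubClassifier).then((r) => console.log(r));
```

The first message resolves through the rules without touching the classifier; the second matches no pattern and falls through to it.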

4. Agentic RAG — Dynamic Retrieval vs. Fixed Pipelines

Standard RAG: user sends a query, system retrieves relevant documents, injects them into context, model responds. Linear pipeline, fixed flow.

The production problem: complex queries don’t resolve in a single retrieval pass. The agent needs to fetch, evaluate the quality of what it got, reformulate the query, fetch again, and synthesize across multiple passes.

Agentic RAG puts the agent in control of the retrieval loop:

- The agent decides whether it needs to retrieve information

- It reformulates the query to maximize relevance

- It evaluates the quality of what was retrieved

- It decides whether another retrieval pass is needed before responding
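
The loop described above can be sketched as a small control function. Everything the loop calls (`needsRetrieval`, `retrieve`, `grade`, `reformulate`) is a hypothetical helper injected by the caller, so the sketch makes no assumptions about your vector store or model provider. The stubs at the bottom exist only to make it runnable.

```javascript
// Agent-controlled retrieval loop. All helpers are injected and hypothetical.
async function agenticRetrieve(question, helpers) {
  const { needsRetrieval, retrieve, grade, reformulate, maxPasses = 3 } = helpers;

  // Step 1: the agent decides whether retrieval is needed at all.
  if (!(await needsRetrieval(question))) {
    return { docs: [], passes: 0, sufficient: true };
  }

  let query = question;
  const collected = [];
  for (let pass = 1; pass <= maxPasses; pass++) {
    collected.push(...(await retrieve(query)));

    // Step 2: evaluate what has been gathered so far.
    const verdict = await grade(question, collected); // expected shape: { sufficient: boolean }
    if (verdict.sufficient) return { docs: collected, passes: pass, sufficient: true };

    // Step 3: rewrite the query before the next pass.
    if (pass < maxPasses) query = await reformulate(question, collected);
  }
  // Pass budget exhausted: return what we have and let the caller decide.
  return { docs: collected, passes: maxPasses, sufficient: false };
}

// Stub helpers over an in-memory "index" so the loop can run locally.
const corpus = {
  'refund policy': ['Refunds are available within 30 days.'],
  'refund policy annual plan': ['Annual plans are refunded pro rata.'],
};
const stubHelpers = {
  needsRetrieval: async () => true,
  retrieve: async (q) => corpus[q] ?? [],
  grade: async (_question, docs) => ({ sufficient: docs.length >= 2 }),
  reformulate: async (question) => `${question} annual plan`,
};

agenticRetrieve('refund policy', stubHelpers).then((result) => {
  console.log(`passes: ${result.passes}, docs: ${result.docs.length}`);
});
```

The `maxPasses` cap matters in practice: without it, a grader that never returns `sufficient` would loop, and spend tokens, indefinitely.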

Combined with graph-based reasoning — a technique that connects information across documents via a knowledge graph rather than treating each document as an isolated unit — the accuracy improvement for complex use cases is substantial.

Practical entry point: Supabase with pgvector covers 90% of solo builder use cases. Free tier works for development. Pro plan ($25/month) handles moderate production load.

    -- Enable pgvector extension (once per database)
    create extension if not exists vector;

    -- Documents table for RAG
    create table documents (
      id uuid primary key default gen_random_uuid(),
      content text,
      embedding vector(1536), -- dimensions for text-embedding-3-small (OpenAI) or equivalent
      metadata jsonb,
      created_at timestamptz default now()
    );

    -- ivfflat index: approximate nearest-neighbor search (faster than exact search at scale)
    -- pgvector's guidance is lists ≈ rows / 1000 up to ~1M rows, so lists = 100 suits roughly 100k rows
    -- Create the index after loading data: ivfflat builds its clusters from existing rows
    create index on documents using ivfflat (embedding vector_cosine_ops)
      with (lists = 100);

5. Tool Management — The Hidden Cost of JSON Schemas

MCP (Model Context Protocol), now governed by the Linux Foundation, standardized how agents connect to external tools. Great for interoperability. There’s a hidden cost most builders never account for.

A single complex JSON schema consumes 500+ tokens. At an average of roughly 550 tokens per schema, 90 connected tools add up to about 50,000 tokens of overhead before the user types a single character. That’s real API cost and real latency, before any useful work happens.

The fix is the same progressive disclosure principle applied to tools:

- Discovery phase: the agent knows only tool names and descriptions

- Activation phase: full schema loads only when the tool is about to be used

- Periodic audit: remove tools rarely used or that can be merged into broader ones
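
Applied to tools, the discovery and activation phases might look like the sketch below. The three tool definitions are invented for illustration (real MCP servers usually ship larger schemas), and the token estimate uses a rough 4-characters-per-token heuristic rather than a real tokenizer, so treat the numbers as relative, not absolute.

```javascript
// Rough heuristic only: ~4 characters per token is an assumption, not a tokenizer.
const estimateTokens = (value) => Math.ceil(JSON.stringify(value).length / 4);

// Hypothetical tool definitions using the name / description / inputSchema shape.
const tools = [
  {
    name: 'create_invoice',
    description: 'Create an invoice for a customer',
    inputSchema: {
      type: 'object',
      properties: {
        customerId: { type: 'string', description: 'Internal customer identifier' },
        amountCents: { type: 'integer', description: 'Invoice total in cents' },
        dueDate: { type: 'string', description: 'ISO 8601 due date' },
      },
      required: ['customerId', 'amountCents'],
    },
  },
  {
    name: 'search_tickets',
    description: 'Search support tickets by keyword',
    inputSchema: {
      type: 'object',
      properties: {
        query: { type: 'string', description: 'Free-text search query' },
        status: { type: 'string', description: 'Filter: open, pending, or closed' },
        limit: { type: 'integer', description: 'Maximum number of results' },
      },
      required: ['query'],
    },
  },
  {
    name: 'send_email',
    description: 'Send a transactional email',
    inputSchema: {
      type: 'object',
      properties: {
        to: { type: 'string', description: 'Recipient email address' },
        subject: { type: 'string', description: 'Subject line' },
        body: { type: 'string', description: 'Plain-text message body' },
      },
      required: ['to', 'subject', 'body'],
    },
  },
];

// Discovery phase: expose only name and description.
const discoveryView = tools.map(({ name, description }) => ({ name, description }));

// Activation phase: return full definitions only for the tools about to be used.
function activate(toolNames) {
  return tools.filter((tool) => toolNames.includes(tool.name));
}

const fullCost = estimateTokens(tools);
const lazyCost = estimateTokens(discoveryView) + estimateTokens(activate(['create_invoice']));
console.log(`all schemas upfront: ~${fullCost} tokens, lazy loading: ~${lazyCost} tokens`);
```

With three tools the gap is small; the point of the pattern is that the upfront cost grows with every connected tool while the lazy cost grows only with the tools a session actually touches.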

Practical rule: if you have more than 20 tools connected, you’re almost certainly paying for context that generates no value. Audit quarterly.

Implementation Stack for Today

You don’t need complex infrastructure to start. This stack covers all 5 patterns with tools most solo builders already use. Estimated setup from scratch: 4–8 hours.

CLAUDE.md as Your Context Foundation

Your CLAUDE.md is your native progressive disclosure system. Structure it with:

- Core identity section (always loaded)

- Separate capability sections (loaded on demand via reference)

- Explicit routing instructions

- Known error traces and how to avoid them

MCP for Standardized Connections

Use MCP to connect your agent to external tools. Document each tool with a precise description of when to use it — that’s what drives automatic routing.

Prioritize MCP servers with lean schemas. Prefer specialized tools over generic ones that try to do everything.

n8n for Orchestration and Routing

n8n is where routing and compression happen in practice. From a single workflow you can:

- Classify incoming queries before they reach the LLM

- Automatically compress conversation history

- Route to specialized agents based on detected intent

- Run agentic RAG loops with decision nodes

Supabase + pgvector for RAG

For most solo builder use cases, Supabase with pgvector is sufficient. Free tier covers development. Pro plan handles moderate production usage.

5 Ways to Monetize Context Engineering

Context engineering isn’t just a technical skill. It’s a commercial asset — and right now the market is early enough that knowing it well is a genuine differentiator.

1. Sell Structured CLAUDE.md Templates and System Prompts

The market for “context configurations” is forming now. Builders who need reliable agents for specific use cases — customer support, project management, data analysis — will pay for a working setup that someone else has already debugged.

Well-structured CLAUDE.md templates for specific niches sell for $19 to $99 on Gumroad, Lemon Squeezy, and similar platforms.

The differentiator is specificity. Not “generic agent template” — but “context engineering setup for a content agency” or “CLAUDE.md for B2B SaaS support automation.”

How to get your first buyers: post a thread on Reddit (r/SideProject, r/Entrepreneur) or on Twitter/X showing a before-and-after of an agent with and without proper context setup. A post demonstrating the real problem converts better than any sales page.

2. Context Engineering Setup Service

As the market for “AI agents for small businesses” grows, many small companies hire someone to build an agent, then discover it breaks in production, with no one left to fix the context layer.

You can position yourself at exactly that gap: as a context engineering specialist who makes existing agents production-stable. Clear value proposition: “your agent doesn’t work reliably — I’ll fix the context layer.”

Pricing: $500 to $2,000 per project, depending on complexity. For clients needing ongoing maintenance: $200–$500/month retainers.

How to find your first client: search startup Slack groups, builder Discord communities, and LinkedIn for posts complaining that “my agent stopped working” or “my automation broke.” Offer a free context audit as a lead-in — most people accept because the problem is real and few know how to diagnose it.

3. Build Micro-SaaS with Reliable Agents

The real competitive advantage of mastering context engineering: you can build products with agents that compete with SaaS tools costing 10x more — built by entire teams.

A micro-SaaS support automation product with a reliable agent can charge $99–$299/month per client. If you’ve solved context engineering, your agent quality competes with enterprise solutions, at solo builder margins.

How to validate before building: identify a vertical where agents break often (support, data extraction, onboarding). Deliver it manually for the first 3 clients and only automate once the problem — and the payment — are confirmed.

4. API Cost Reduction as a Billable Value

If you already have clients running agents, properly implemented context engineering can cut their API costs 40–60%. That’s direct margin improvement.

For agencies building automations for clients: lower costs mean higher margin. Or it becomes a clear value proposition for clients complaining about operational expenses.

How to present it: calculate the client’s current API spend, project what it would be with context engineering applied, and present the delta as ROI. Clients paying per token understand this argument immediately.
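
The calculation itself is simple enough to script. In this sketch every input is a placeholder: the call volume, tokens per call, price per million tokens, and the 50% reduction are made-up numbers chosen to keep the arithmetic easy to follow. Substitute the client’s real usage and your provider’s current pricing.

```javascript
// Monthly spend = calls/day × tokens/call × days × price per token.
function monthlyApiCost({ callsPerDay, tokensPerCall, pricePerMillionTokens, days = 30 }) {
  return (callsPerDay * tokensPerCall * days * pricePerMillionTokens) / 1_000_000;
}

// Project spend after reducing tokens per call by `tokenReduction` (0 to 1).
function projectSavings(usage, tokenReduction) {
  const current = monthlyApiCost(usage);
  const projected = monthlyApiCost({
    ...usage,
    tokensPerCall: usage.tokensPerCall * (1 - tokenReduction),
  });
  return { current, projected, monthlySavings: current - projected };
}

// Placeholder inputs (assumptions, not real pricing).
const clientUsage = { callsPerDay: 1000, tokensPerCall: 20000, pricePerMillionTokens: 1 };
const estimate = projectSavings(clientUsage, 0.5);

console.log(
  `current: $${estimate.current.toFixed(2)}/mo, ` +
  `projected: $${estimate.projected.toFixed(2)}/mo, ` +
  `savings: $${estimate.monthlySavings.toFixed(2)}/mo`
);
```

With these placeholder inputs the monthly spend drops from $600 to $300. Running the same function at both ends of a reduction range gives the client a conservative and an optimistic figure instead of a single promise.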

5. Charge Premium for Automations That Don’t Break

In the automation market, the gap between something that works for 3 months and something that works for 3 years is enormous. Context engineering is a core reason the second scenario is achievable.

If you can demonstrate a track record of agents that stay reliable in production, you have justification for premium pricing — and for recurring maintenance contracts that generate predictable monthly revenue.

How to build the track record: document publicly. A monthly post on LinkedIn or Twitter showing real metrics — “agent ran X hours without a failure, cost $Y in API” — builds credibility before any formal portfolio exists.

Where to Start

Context engineering isn’t a skill you learn in a tutorial and immediately master. It deepens with real production exposure.

The most efficient starting path:

- Apply progressive disclosure to your next CLAUDE.md. This single pattern resolves most agent inconsistency problems immediately, and takes less than an hour to implement.

- Add a context compression node to your main n8n workflow. It doesn’t need to be sophisticated. The basic pattern makes a measurable difference in long-running agents.

- Audit your connected MCP tools. How many do you actually use in active workflows? How many are consuming token budget with no return?

- Run a single RAG experiment with Supabase + pgvector. You probably have a document set that your agent should be retrieving dynamically instead of having pasted into the system prompt.

Each of these takes less than a day. Combined, they close the gap between a demo that impressed someone and an agent that keeps working without you watching it.

Frequently Asked Questions

Is context engineering the same as prompt engineering?

No. Prompt engineering optimizes a specific question. Context engineering designs the full information infrastructure that an agent operates within over time. A good prompt improves one response. Good context engineering makes your agent behave consistently across weeks of real use.

With models offering 200K+ token context windows, do I still need to worry about this?

Yes — and the reason is counterintuitive. First, quality degradation happens before the window fills. Models degrade in attention and precision well before hitting the token limit because more context makes it harder for the model to distinguish signal from noise. Second, compounding cost: if you make 1,000 API calls per day, even a 10% reduction in tokens per call is meaningful at scale. Third, latency: every context token adds processing time. An agent that processes 50,000 tokens of overhead before any useful work runs has noticeably higher latency than one processing 5,000. For hobby projects, you can defer this. For production, these small inefficiencies compound.

Do I need to be a developer to implement these patterns?

Not in the traditional sense. All 5 patterns can be implemented with tools an intermediate solo builder already uses: CLAUDE.md, n8n, Supabase, MCP. The highest-impact pattern — progressive disclosure — starts with how you structure your configuration files.

Do these patterns work with models other than Claude?

Yes. Context engineering patterns are model-agnostic — they work with GPT-4, Gemini, and local models via Ollama. Implementation specifics vary, but the principles hold across all LLMs.

Which pattern should I implement first?

Progressive disclosure. It has the highest impact, lowest implementation barrier, and produces visible results immediately. Context compression is second priority, especially if you operate agents on long tasks or multi-turn conversations.

Is this relevant if my main automation platform is n8n?

Especially relevant. n8n’s decision nodes map directly to context routing logic. Orchestrating multiple specialized agents — each with its own optimized context — is exactly what n8n does well. Adding context compression upstream of your LLM calls is a natural n8n workflow extension.